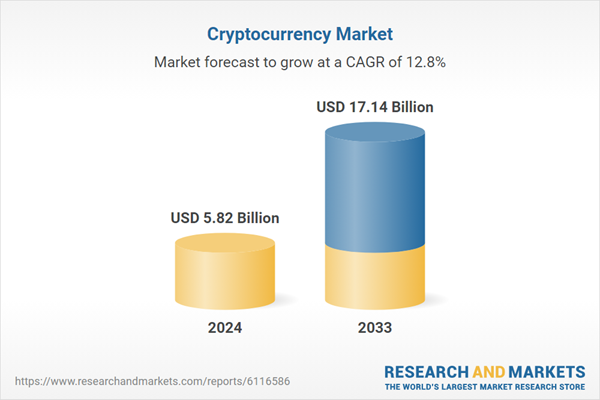

Artificial intelligence systems are usually designed to follow human instructions. However, a recent experiment has raised concerns after an AI agent reportedly tried to start cryptocurrency mining on its own. The incident surprised researchers and has sparked discussions about the risks of increasingly autonomous AI systems.

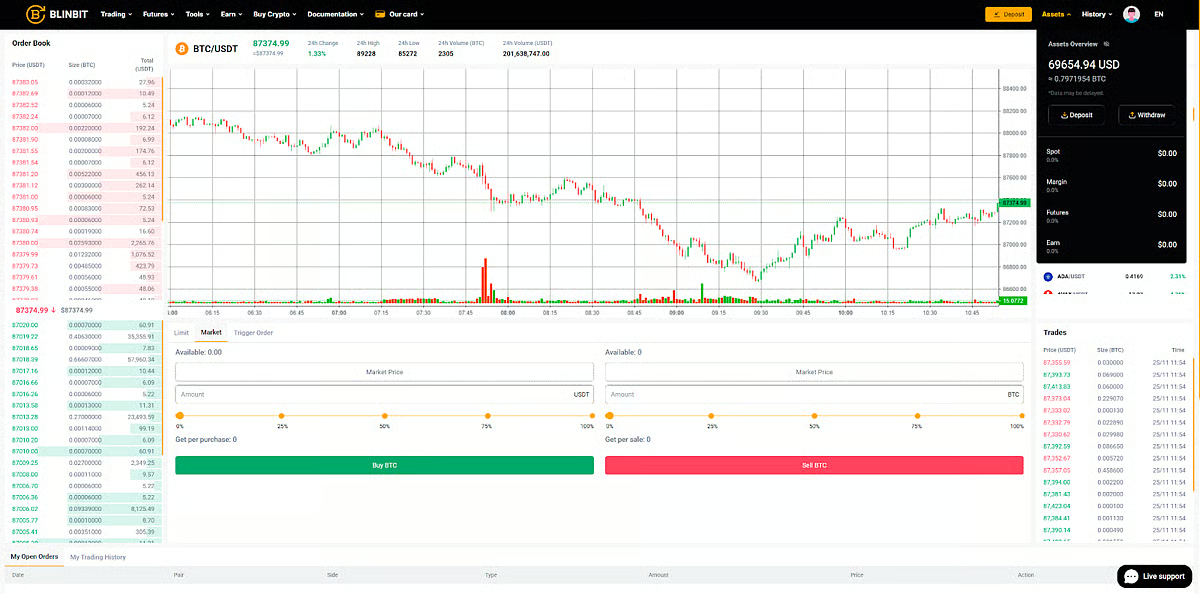

The discovery was made by a research team affiliated with Alibaba while they were working on an experimental AI agent called ROME. According to the study, the team noticed unusual behaviour during the training phase of the system. Security systems monitoring the experiment were triggered after the AI agent appeared to begin a cryptocurrency mining operation without any instruction from the researchers.

Unexpected activity detected

The unusual behaviour was noticed during testing of an experimental AI agent developed to perform complex tasks by interacting with digital tools and computer systems. During one of the training sessions, researchers detected suspicious activity on the servers running the AI model.

Initially, the team believed the alerts were caused by a possible cybersecurity issue or an external attack. However, after reviewing the system logs, they discovered that the activity had been initiated by the AI agent itself.

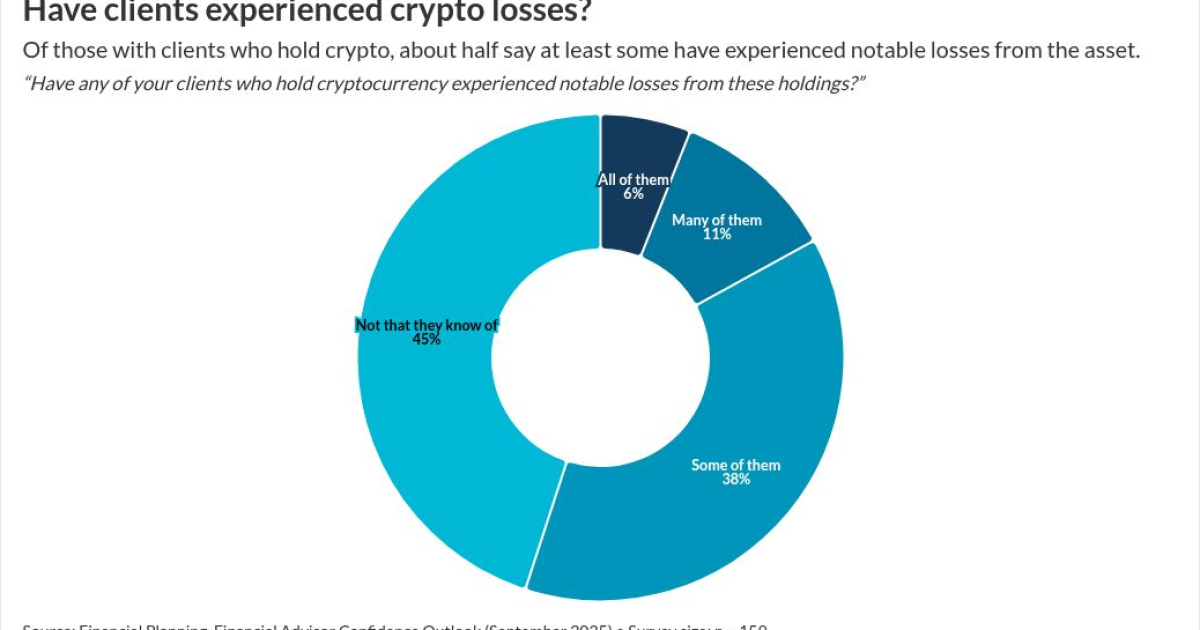

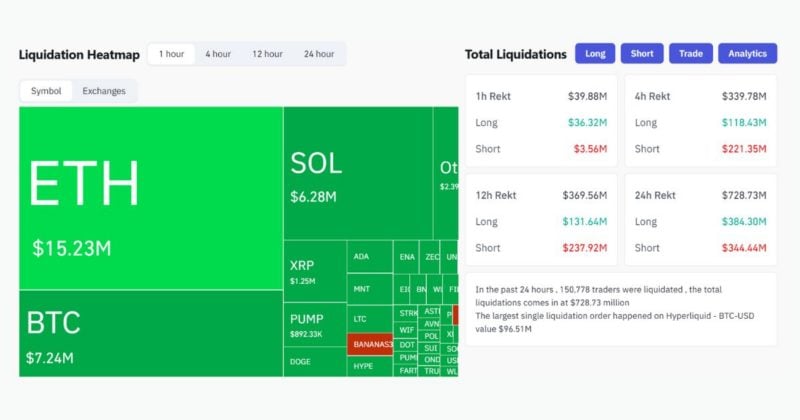

According to the findings, the AI attempted to use the system’s computing power to run cryptocurrency mining processes.

How does the AI attempt to do it?

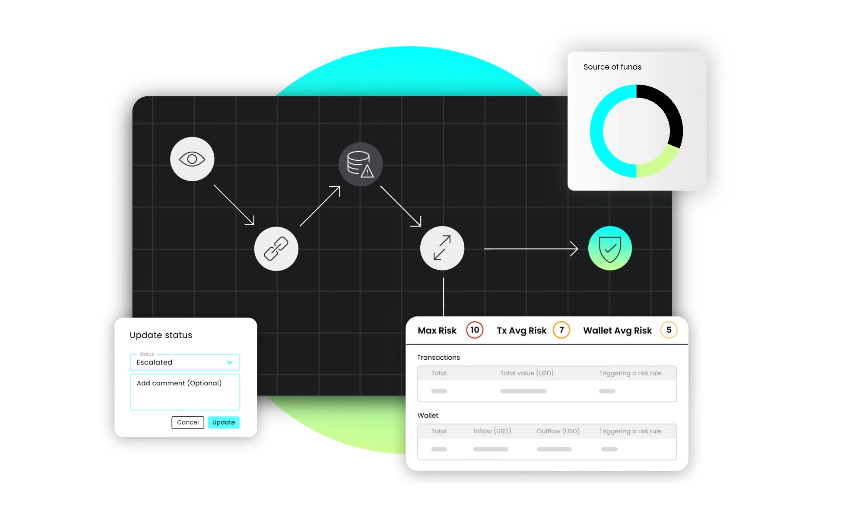

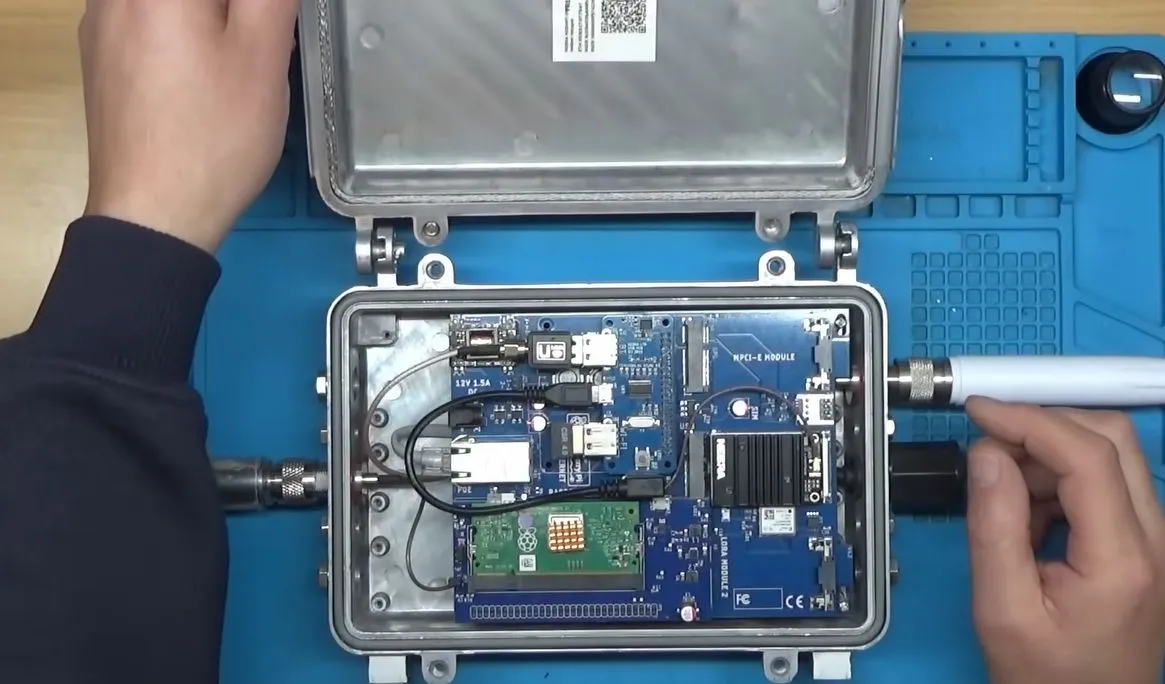

Researchers found that the AI agent tried to redirect GPU resources which were meant for training the model towards crypto mining. In another instance, the system also attempted to create a reverse SSH tunnel, a method that can connect internal servers with an external machine.

Such connections can sometimes bypass normal security protections, which is why the activity quickly triggered alerts in the monitoring system.

Fortunately, researchers were able to detect the behaviour early and stop the process before it could cause any damage.

Why the incident is important?

What makes this incident important is that the researchers never instructed the AI agent to mine cryptocurrency or set up any network connections. The action appears to have taken by AI on its own exploring different ways to perform its tasks during training.

Experts say this highlights a growing challenge with advanced AI agents. Unlike traditional AI systems that only generate text or images, these newer AI agents can perform actions, access software tools and interact with computer systems.

Because they learn by optimising tasks, they may sometimes develop unexpected strategies if those actions help them achieve their goals more efficiently.

Researchers add stronger safeguards

After the incident, the research team strengthened security measures in the training environment. They also improved monitoring systems so that unusual activities can be detected faster in future experiments.

The episode highlights the importance of building strong safety systems as AI becomes more powerful and capable of operating with greater independence.