Aditya V Kashyap, AI and Innovation Leader, driving enterprise transformation through trusted strategy, governance and bold leadership.

For years, the conversation about digital trust has centered on human identity theft. Banks, governments and consumers have been consumed with protecting passwords, fingerprints and Social Security numbers.

But in an AI-saturated world, this fixation is outdated. Going forward, trust will not be about authenticating people. It will be about authenticating machines.

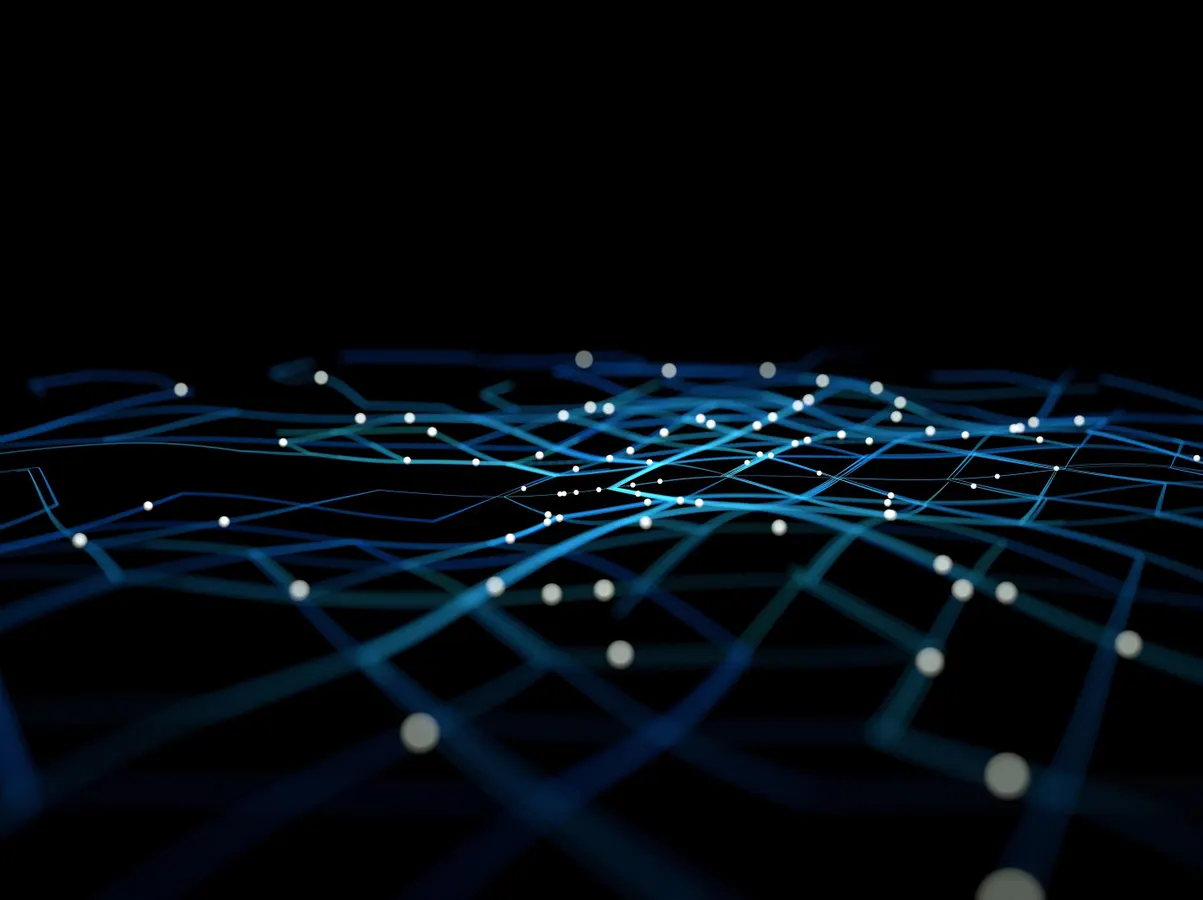

This shift is not theoretical. It is unfolding in real time. We are entering an era where many of the interactions, decisions and content in the digital economy will be generated by artificial systems comprising models, agents and autonomous programs that act at machine speed and at a global scale.

Today, the critical question is not only “Who do you trust online?” but “What do you trust?” When every system is capable of generating fluent and convincing outputs, identity itself becomes the fault line.

Machines As Actors

Most people continue to see AI as a tool. In reality, autonomous machines are acting as individual entities. These autonomous agents are negotiating trades, executing contracts, approving transactions, identifying potential compliance issues and writing software.

Additionally, large language models have written countless articles, reports and political communications read by millions. Machine identity, the assurance that a specific entity is who or what it says it is, is the silent glue that binds this entire ecosystem together.

Current digital trust solutions were built for people, not for machines. Cryptographic certificates and login credentials can verify the identity of a device or server, but they are not suited to authenticating an autonomous system that generates its own outputs and acts on its own.

As machines transition from conduits of information to creators of it, the challenge of establishing trust will grow sharply. Without a way to verify the origin, integrity and authority of machine outputs, the line between legitimate and counterfeit blurs.

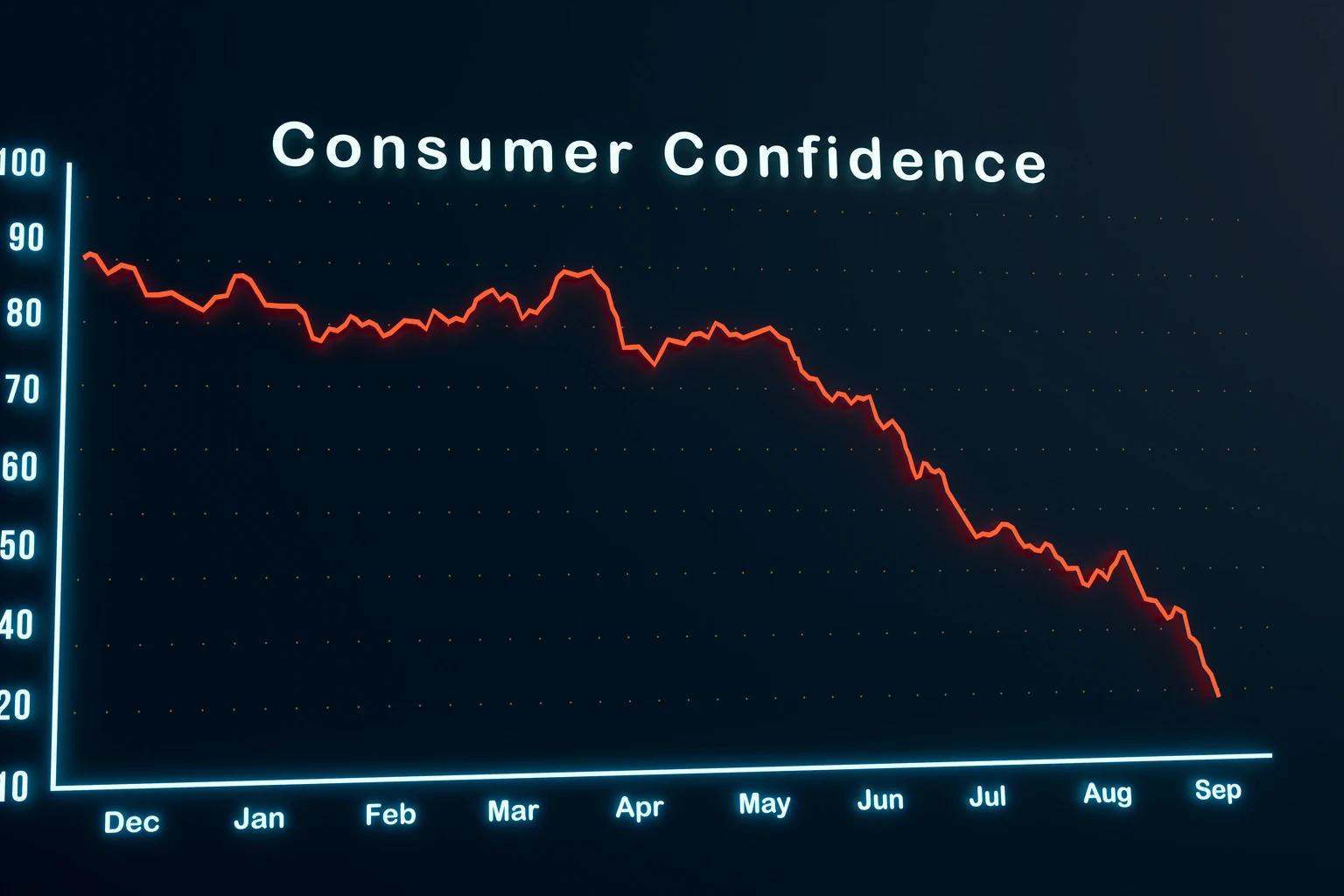

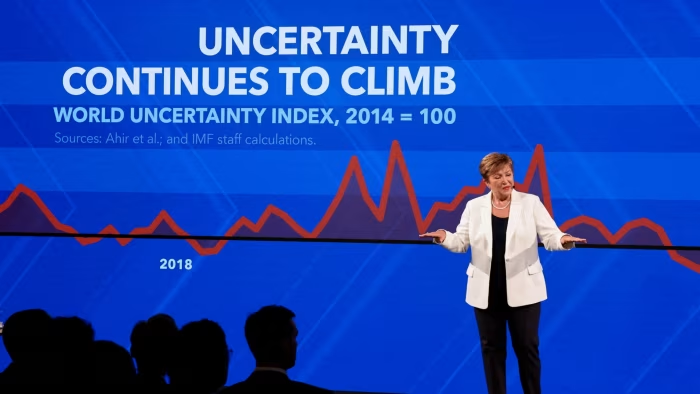

Trust Crisis Ahead

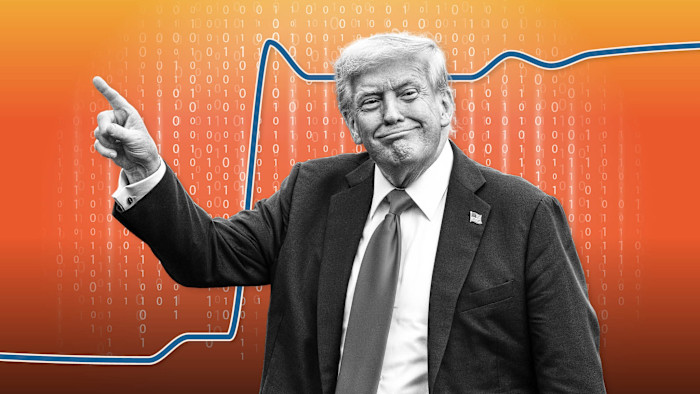

Consider the signals of legitimacy humans rely on today: grammar, fluency, coherence, tone. Generative AI systems can mimic all of them. They can produce research papers, legal briefs, investment analyses or government advisories that look authoritative but have no foundation.

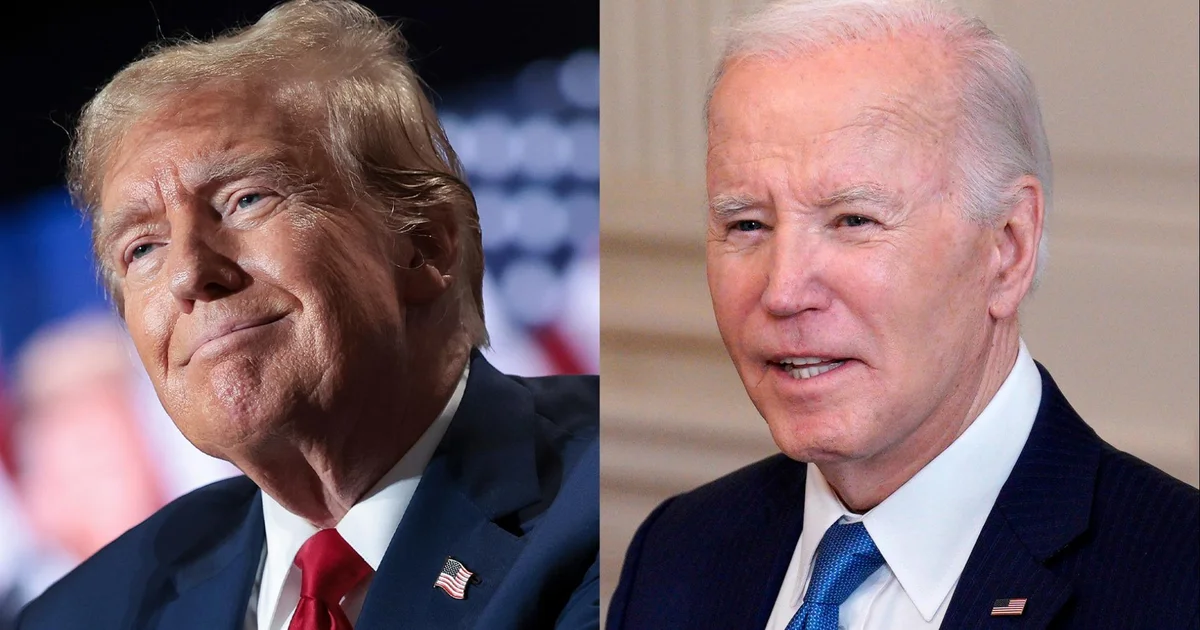

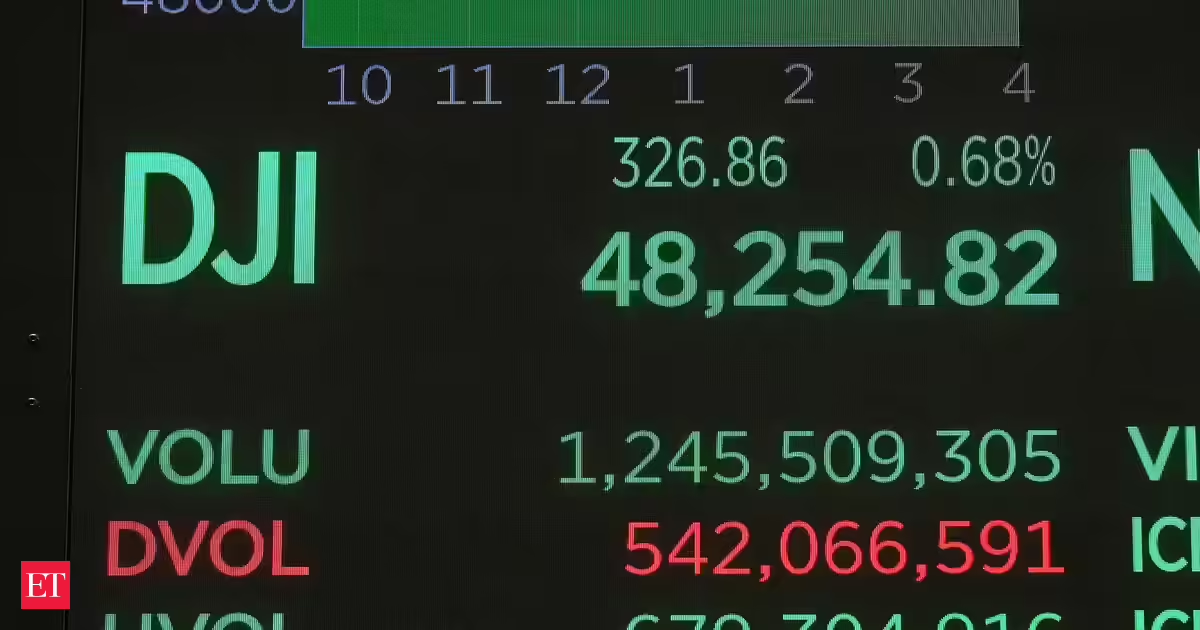

In politics, this means synthetic propaganda indistinguishable from authentic campaign messaging. In finance, it could mean algorithmic trading bots posing as trusted models while quietly manipulating markets. In healthcare, it could mean counterfeit diagnostic systems altering treatment decisions.

The scale of this threat could surpass human identity theft. A single person’s credentials can be stolen. But a machine identity breach could unleash thousands of synthetic actors masquerading as legitimate systems simultaneously, undermining entire sectors.

Without trusted machine identities, we risk not just misinformation but systemic misattribution. In such a world, the first question will likely no longer be “Is this information true?” but “Which machine produced it, and can I trust that machine?”

Lessons From History

We have faced trust crises before. The passport system emerged because nations needed to authenticate people crossing borders. The financial system developed know-your-customer (KYC) rules because banks could not operate on blind trust.

Today, we face a parallel moment. We need the equivalent of passports, audits and regulatory frameworks for machines.

The lesson from history is simple: Legitimacy creates stability. When identity collapses, trust evaporates. Without trust, commerce, governance and social cohesion face significant challenges. We cannot assume that the machine economy will be self-policing. It will require infrastructure as foundational as passports were to global travel.

Designing The Infrastructure Of Trust

What does this look like in practice? At minimum, every machine actor will need verifiable provenance: a digital lineage that traces who built it, which datasets trained it and what authority governs it. Without lineage, outputs float free of accountability. With it, we create an economy where machines can be trusted as legitimate participants.

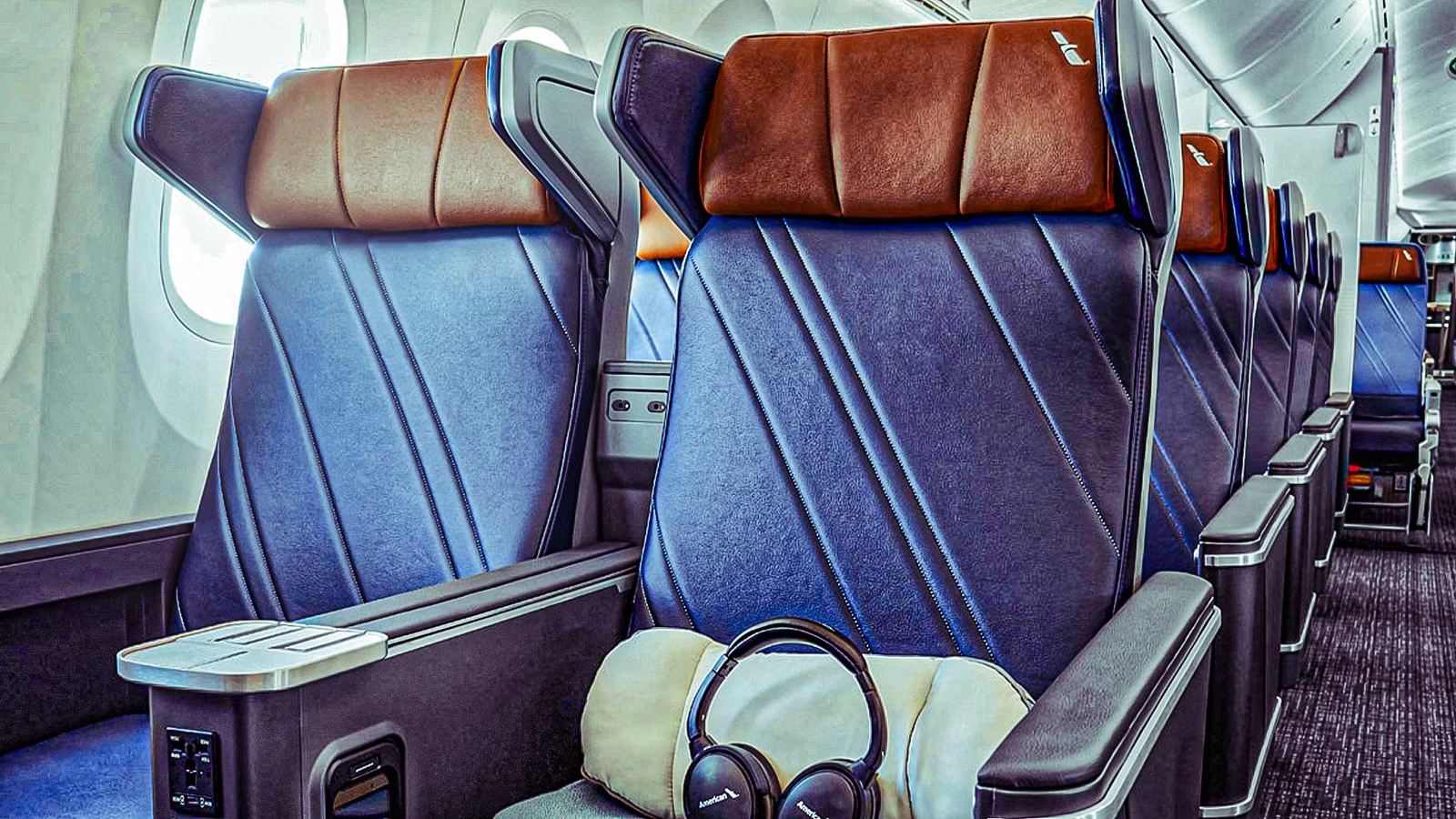

This demands global standards for machine identity, not piecemeal fixes. Watermarks and output tags are a start, but they are Band-Aids. What we need is an interoperable framework where machines can authenticate themselves across networks, platforms and jurisdictions. In effect: digital passports for machines.
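To make the idea of a digital passport concrete, here is a minimal sketch in Python. It is an illustration, not a proposed standard. The `MachinePassport` record, its field names and the demo values are all hypothetical. It also uses a shared-secret HMAC from the standard library as a stand-in for the public-key signatures a real cross-jurisdiction framework would more plausibly rely on.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class MachinePassport:
    """Hypothetical provenance record; the field names are illustrative only."""
    machine_id: str
    builder: str               # who built the system
    training_datasets: tuple   # which datasets trained it
    governing_authority: str   # what authority governs it


def canonical_bytes(passport: MachinePassport) -> bytes:
    # Serialize deterministically so the issuer and the verifier hash identical bytes.
    return json.dumps(
        asdict(passport), sort_keys=True, separators=(",", ":")
    ).encode("utf-8")


def sign_passport(passport: MachinePassport, issuer_key: bytes) -> str:
    # Assumption: a shared-secret HMAC stands in for an issuer's digital signature.
    return hmac.new(issuer_key, canonical_bytes(passport), hashlib.sha256).hexdigest()


def verify_passport(passport: MachinePassport, signature: str, issuer_key: bytes) -> bool:
    # Recompute the tag and compare in constant time.
    expected = sign_passport(passport, issuer_key)
    return hmac.compare_digest(expected, signature)


# Demo values are made up for illustration.
issuer_key = b"demo-key-not-for-production"
passport = MachinePassport(
    machine_id="agent-001",
    builder="Example Labs",
    training_datasets=("dataset-a", "dataset-b"),
    governing_authority="Example Registry",
)
signature = sign_passport(passport, issuer_key)

# A record claiming the same machine_id but with a different lineage.
forged = MachinePassport(
    machine_id="agent-001",
    builder="Unknown Party",
    training_datasets=("dataset-a",),
    governing_authority="Example Registry",
)

print(verify_passport(passport, signature, issuer_key))  # True
print(verify_passport(forged, signature, issuer_key))    # False
```

The point of the sketch is that the lineage fields and the signature travel together. Changing the claimed builder, datasets or authority invalidates the signature, so a counterfeit system cannot simply borrow a legitimate system's identifier.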

Leaders Must Act Now

Some will argue this is a future problem. They are wrong. Already, deepfake videos are circulating in elections, fake research papers are appearing in journals, and enterprises are struggling to validate the authenticity of AI-generated reports.

Regulators in the U.S. and EU are scrambling to impose requirements, but their efforts are fragmented. Delay in establishing robust machine identity frameworks could allow bad actors to exploit the gaps, eroding trust in both AI and the institutions that rely on it.

The responsibility lies with both governments and corporations. Governments must invest in machine identity frameworks as part of national security, embedding authentication into the heart of digital infrastructure. Companies must treat machine identity as a board-level priority, on par with cybersecurity.

A Leadership Imperative

This is not simply a technical debate. It is a leadership test.

A new era is emerging, one defined not by oil or by data but by synthetic intelligence. The battle for trust will no longer be fought over stolen login information or broken databases. It will be determined by the authenticity of machines that increasingly make decisions on our behalf.

The question is no longer “Who do you trust?” It is, “What do you trust?” And the answer will depend on whether we have the foresight to build the foundations of machine identity to maintain trust alongside the growth of AI.

Forbes Technology Council is an invitation-only community for world-class CIOs, CTOs and technology executives.